Dr. Albert Hsiao and his colleagues at the University of California-San Diego health system had been working for 18 months on an artificial intelligence program designed to help doctors identify pneumonia on a chest X-ray. When the coronavirus hit the United States, they decided to see what it could do.

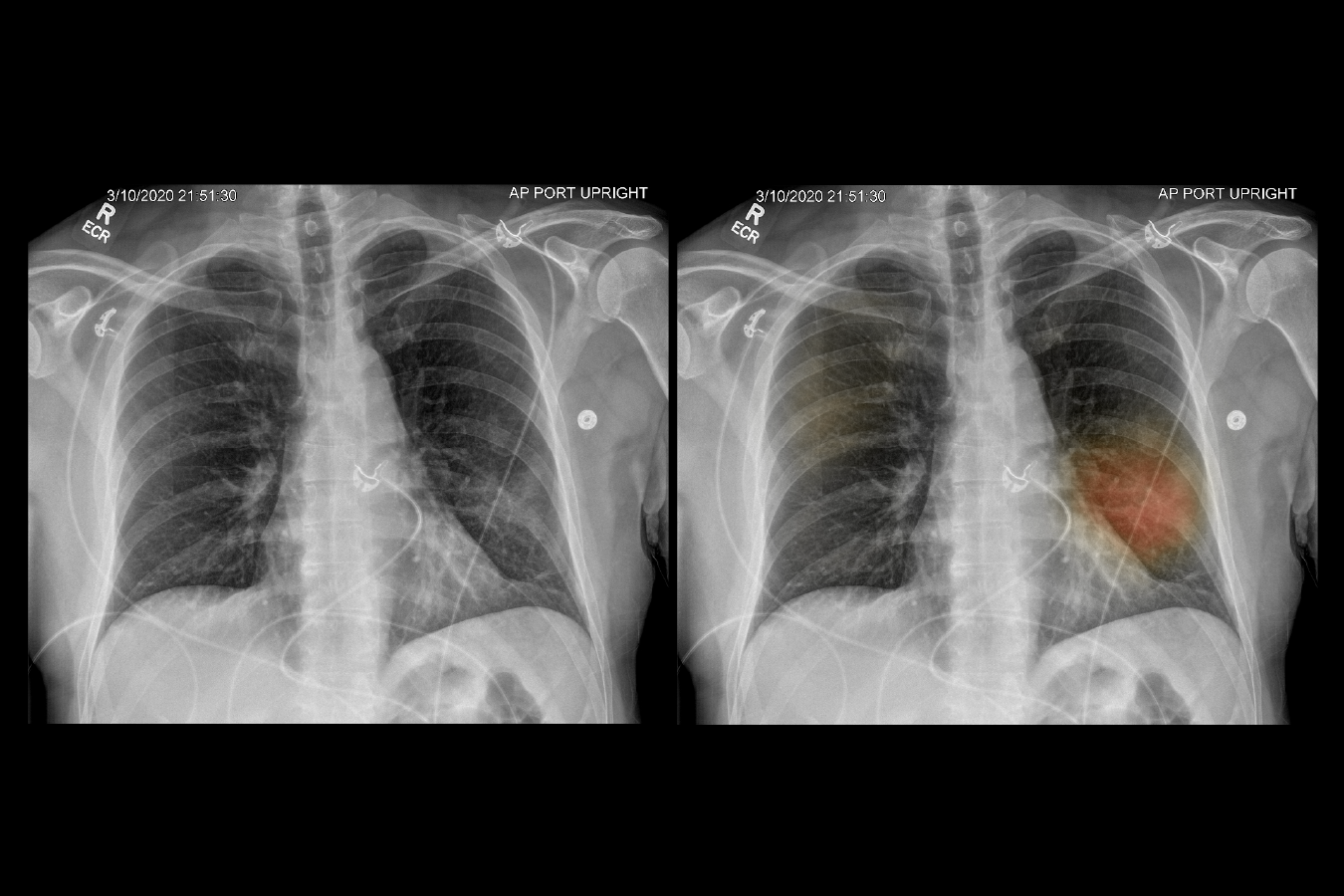

The researchers quickly deployed the application, which dots X-ray images with spots of color where there may be lung damage or other signs of pneumonia. It has now been applied to more than 6,000 chest X-rays, and it’s providing some value in diagnosis, said Hsiao, the director of UCSD’s augmented imaging and artificial intelligence data analytics laboratory.

His team is one of several around the country that have pushed AI programs developed in a calmer time into the COVID-19 crisis to perform tasks like deciding which patients face the greatest risk of complications and which can be safely channeled into lower-intensity care.

The machine-learning programs scroll through millions of pieces of data to detect patterns that may be hard for clinicians to discern. Yet few of the algorithms have been rigorously tested against standard procedures. So while they often appear helpful, rolling out the programs in the midst of a pandemic could be confusing to doctors or even dangerous for patients, some AI experts warn.

“AI is being used for things that are questionable right now,” said Dr. Eric Topol, director of the Scripps Research Translational Institute and author of several books on health IT.

Topol singled out a system created by Epic, a major vendor of electronic health records software, that predicts which coronavirus patients may become critically ill. Using the tool before it has been validated is “pandemic exceptionalism,” he said.

Epic said the company’s model had been validated with data from more than 16,000 hospitalized COVID-19 patients in 21 health care organizations. No research on the tool has been published, but, in any case, it was “developed to help clinicians make treatment decisions and is not a substitute for their judgment,” said James Hickman, a software developer on Epic’s cognitive computing team.

Others see the COVID-19 crisis as an opportunity to learn about the value of AI tools.

“My intuition is it’s a little bit of the good, bad and ugly,” said Eric Perakslis, a data science fellow at Duke University and former chief information officer at the Food and Drug Administration. “Research in this setting is important.”

Nearly $2 billion poured into companies touting advancements in health care AI in 2019. Investments in the first quarter of 2020 totaled $635 million, up from $155 million in the first quarter of 2019, according to digital health technology funder Rock Health.

At least three health care AI technology companies have made funding deals specific to the COVID-19 crisis, including Vida Diagnostics, an AI-powered lung-imaging analysis company, according to Rock Health.

Overall, AI’s implementation in everyday clinical care is less common than hype over the technology would suggest. Yet the coronavirus crisis has inspired some hospital systems to accelerate promising applications.

UCSD sped up its AI imaging project, rolling it out in only two weeks.

Hsiao’s project, with research funding from Amazon Web Services, the University of California and the National Science Foundation, runs every chest X-ray taken at its hospital through an AI algorithm. While no data on the implementation has been published yet, doctors report that the tool influences their clinical decision-making about a third of the time, said Dr. Christopher Longhurst, UC San Diego Health’s chief information officer.

“The results to date are very encouraging, and we’re not seeing any unintended consequences,” he said. “Anecdotally, we’re feeling like it’s helpful, not hurtful.”

AI has advanced further in imaging than in other areas of clinical medicine because radiological images have tons of data for algorithms to process, and more data makes the programs more effective, said Longhurst.

But while AI specialists have tried to get AI to do things like predict sepsis and acute respiratory distress (researchers at Johns Hopkins University recently won a National Science Foundation grant to use it to predict heart damage in COVID-19 patients), it has been easier to plug it into less risky areas such as hospital logistics.

In New York City, two major hospital systems are using AI-enabled algorithms to help them decide when and how patients should move into another phase of care or be sent home.

At Mount Sinai Health System, an artificial intelligence algorithm pinpoints which patients might be ready to be discharged from the hospital within 72 hours, said Robbie Freeman, vice president of clinical innovation at Mount Sinai.

Freeman described the AI’s suggestion as a “conversation starter,” meant to help clinicians working on patient cases decide what to do. AI isn’t making the decisions.

NYU Langone Health has developed a similar AI model. It predicts whether a COVID-19 patient entering the hospital will suffer adverse events within the next four days, said Dr. Yindalon Aphinyanaphongs, who leads NYU Langone’s predictive analytics team.

The model will be run in a four- to six-week trial with patients randomized into two groups: one whose doctors will receive the alerts, and another whose doctors will not. The algorithm should help doctors generate a list of things that may predict whether patients are at risk for complications after they’re admitted to the hospital, Aphinyanaphongs said.
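
For readers curious what randomizing patients into two groups can look like in practice, here is a minimal sketch of simple randomization. It is an illustration only: the function name `randomize_patients`, the arm labels and the fixed seed are assumptions, and nothing here describes NYU Langone’s actual trial procedure.

```python
import random


def randomize_patients(patient_ids, seed=0):
    """Split patients at random into two arms of near-equal size.

    Hypothetical illustration of simple randomization, not the
    procedure used in any real trial.
    """
    rng = random.Random(seed)      # seeded so the split is reproducible
    shuffled = list(patient_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {
        "alerts": shuffled[:half],      # doctors receive the model's alerts
        "no_alerts": shuffled[half:],   # doctors do not receive alerts
    }


arms = randomize_patients(range(10))
print(len(arms["alerts"]), len(arms["no_alerts"]))
```

Comparing outcomes between the two arms is what lets researchers judge whether the alerts themselves make a difference.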

Some health systems are leery of rolling out a technology that requires clinical validation in the middle of a pandemic. Others say they didn’t need AI to deal with the coronavirus.

Stanford Health Care isn’t using AI to manage hospitalized patients with COVID-19, said Ron Li, the center’s medical informatics director for AI clinical integration. The San Francisco Bay Area hasn’t seen the expected surge of patients who would have provided the mass of data needed to make sure AI works on a population, he said.

Outside the hospital, AI-enabled risk factor modeling is being used to help health systems track patients who aren’t infected with the coronavirus but might be susceptible to complications if they contract COVID-19.

At Scripps Health in San Diego, clinicians are stratifying patients to assess their risk of getting COVID-19 and experiencing severe symptoms using a risk-scoring model that considers factors like age, chronic conditions and recent hospital visits. When a patient scores 7 or higher, a triage nurse reaches out with information about the coronavirus and may schedule an appointment.
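
A threshold-based risk score of this kind might be sketched as follows. Only the cutoff of 7 and the three kinds of inputs come from the reporting above; the point values, the age bands and the names `Patient`, `risk_score` and `needs_outreach` are invented for illustration and do not reflect Scripps Health’s actual model.

```python
from dataclasses import dataclass

# From the article: a score of 7 or higher triggers nurse outreach.
OUTREACH_THRESHOLD = 7


@dataclass
class Patient:
    age: int
    chronic_conditions: int        # number of chronic conditions on record
    recent_hospital_visits: int    # number of recent hospital visits


def risk_score(p: Patient) -> int:
    """Hypothetical point scheme; every weight below is an assumption."""
    score = 0
    if p.age >= 65:
        score += 3
    elif p.age >= 50:
        score += 1
    score += min(2 * p.chronic_conditions, 6)   # capped at 6 points
    score += min(p.recent_hospital_visits, 3)   # capped at 3 points
    return score


def needs_outreach(p: Patient) -> bool:
    """True when the score meets the outreach cutoff."""
    return risk_score(p) >= OUTREACH_THRESHOLD


print(needs_outreach(Patient(age=70, chronic_conditions=2, recent_hospital_visits=1)))
```

Under these made-up weights, a 70-year-old with two chronic conditions and one recent visit scores 8 and would be flagged for a call from a triage nurse.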

Though emergencies provide unique opportunities to try out advanced tools, it’s essential for health systems to ensure doctors are comfortable with them, and to use the tools cautiously, with extensive testing and validation, Topol said.

“When people are in the heat of battle and overstretched, it would be great to have an algorithm to support them,” he said. “We just have to make sure the algorithm and the AI tool isn’t misleading, because lives are at stake here.”

This KHN story first published on California Healthline, a service of the California Health Care Foundation.
